Neural Learning and Intelligent Systems

Revealing key concepts of learning algorithms in biological and artificial neuronal networks

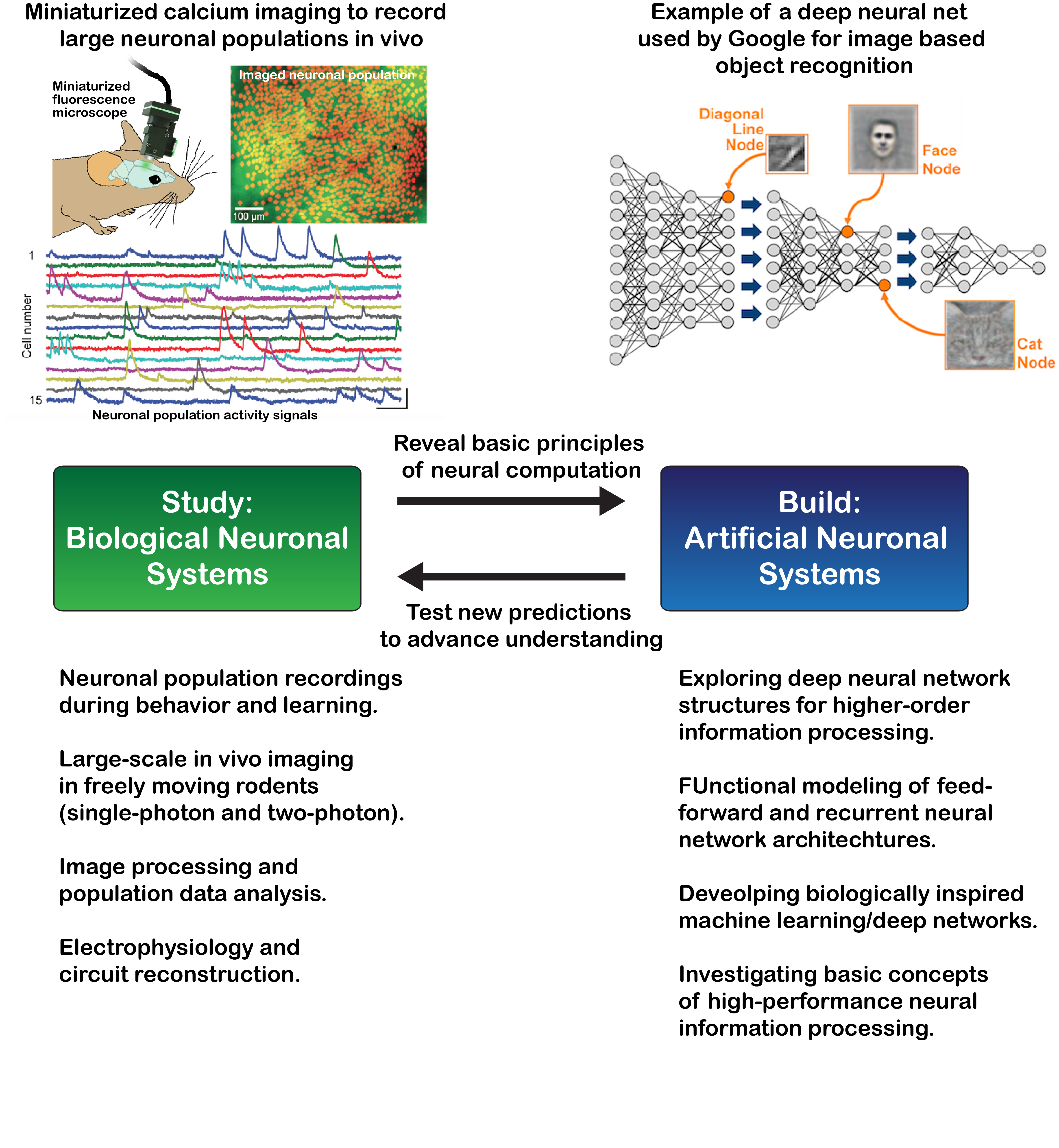

To learn new things, the brain has to change its internal neuronal activity patterns, which encode information across large neuronal ensembles and multiple brain areas. At the same time, these activity patterns are often unreliable, multidimensional and highly complex, which makes it challenging to investigate learning-related changes in network information processing. To gain a mechanistic and analytical understanding of the learning principles of neuronal networks, we use a two-fold research strategy.

As a starting point, we characterize learning-induced changes of neuronal ensemble activity in mice using in vivo population Ca2+ imaging, high-throughput image processing, and data analysis methods commonly used in machine learning. In parallel, we aim to develop biologically inspired multi-layer artificial neuronal network models (ANNs) that exhibit information processing and storage capabilities similar to those observed in real biological networks.

Reverse-engineering neuronal network function at a very abstract level is crucial for understanding the fundamental principles that determine learning-induced changes in neuronal activity patterns. Moreover, the ANN reverse-engineering and development process will help to derive hypotheses about how individual network components (e.g. lateral inhibition or the recurrent connection rate) shape learning-induced changes in neuronal population activity and internal information coding. We can then return to the experiment and test whether these hypotheses hold in vivo for real biological neuronal networks.

Group website

Please find the group website here: